Date

March 4, 2026

Why your organization will likely resist AI transformation and call it strategy

When you observe what organizations like to call transformation, a remarkable and recurring asymmetry becomes visible. The transformation itself is seen as the risk. The risk of keeping what has proven to work is rarely seen and almost always underestimated.

The current discussion about AI and organizations is no different. With one important distinction: in the context of AI, this asymmetry is potentially existential.

What we observe today is not a lack of information. Leaders generally know that markets are shifting, that AI is disrupting business models, that what worked yesterday will operate under different conditions tomorrow. The real problem lies deeper: in the asymmetry between what is visible from the outside and what is actually experienced as risk from within. Transformation steps feel risky because they generate decision costs – resistance, uncertainty, potential loss of control. Maintaining what is familiar does not generate these costs. At least not immediately. At least not visibly.

Organizations don’t only make decisions. They also decide the basis on which future decisions will be made: which programs apply, which communication channels are legitimate, which roles hold which decision-making authority. Whoever sets these premises structures all subsequent decisions. This is, initially, a capability: complexity is reduced, the ability to act is preserved, cognitive energy is saved.

The problem arises when these premises become so self-evident that their contingency – their fundamental changeability – is no longer perceived. Niklas Luhmann called this “undecidable decision premises”: structures that no one recognizes as decisions anymore, because they were never explicitly decided but emerged silently as a byproduct of ongoing operations. They no longer appear as normative choices. They appear as givens.

In the context of AI, this observation takes on a particular quality. In most organizations I work with, the discussion runs along two axes: which processes can be automated or improved by AI, and how do we develop the competencies needed for this? Both are legitimate questions. But both also presuppose the existing organizational structure as a fixed frame within which AI is introduced. What is systematically excluded is the possibility that this structure itself is the problem. Not because it is bad, but because it was built for different requirements – for an environment where information processing was slower, where hierarchy was the only available filter mechanism.

When AI now processes and condenses information that previously had to travel through multiple layers of management, it doesn’t just make existing processes faster. It makes certain positions and structures obsolete in their current form.

This structural friction is not a theoretical construct; it manifests in the core mechanics of how value is generated and protected.

Evidence: The Structural Friction of AI Integration

To ground the observation of “The Risk of the Familiar,” we can look at three distinct domains where the preservation of established decision premises creates a specific, measurable paralysis.

1. Professional Services and the Leverage Paradox

In professional services, for instance, the “billable hour” is far more than an invoicing method. It is the invisible scaffolding of the entire organizational architecture. The traditional leverage model – where profit is generated through the high-intensity labor of junior associates – rests on the premise that value is inherently tied to the duration of cognitive effort. AI-driven document review and automated drafting do not merely “speed up” the process; they collapse the very time horizon on which the firm’s profitability and junior training models are built. The risk lies in the attempt to integrate AI into a structure that economically penalizes efficiency. When revenue stays tied to hours billed while AI sharply cuts the hours each task requires, the firm faces a systemic paradox: to fully embrace the technology is to dismantle the economic basis of the partnership.

2. Corporate Governance and the Latency Trap

Similarly, in the corporate governance of large industrial conglomerates, decision-making follows a rhythm of formal committee cycles and board approvals. These are sophisticated mechanisms for uncertainty absorption, designed to manage liability, regulatory compliance, and the requirements of co-determination. The introduction of predictive AI and real-time risk dashboards creates a profound “latency trap.” While an algorithm may signal a necessary pivot in seconds, the organizational structure requires that this information be filtered through layers of reporting and eventually placed on a monthly board agenda. The formal governance structure, essential for legal and defensive purposes, becomes a bottleneck that prevents the organization from operating at the frequency the environment now demands.

3. The Mittelstand and the Social Defense of Expertise

Even in the Mittelstand, where “personengebundene Expertise” – expertise inextricably bound to the individual – is the cornerstone of the social order, this friction is visible. In precision engineering, the “undecidable decision premise” is often the authority of the “Meister” – the expert whose intuition has been refined over decades. While computer vision and sensor-based systems can now detect deviations with a consistency that no human eye can replicate, replacing the expert’s final sign-off with an algorithmic validation is rarely a purely technical swap. It is a challenge to the informal power structure. The preservation of the manual inspection ritual is framed as “quality assurance,” but its actual function is to serve as a social defense against the loss of professional status.

Absorbing uncertainty

This leads us to a deeper layer of the problem: a form of uncertainty absorption in the wrong direction. Significant resources are invested in answering the question of how AI should be deployed – which tools, which processes, which governance – creating the reassuring feeling of managing the transformation. What remains untouched is the uncertainty that actually matters: whether the structures into which this technology is being embedded are still viable.

The reason this happens is not primarily a lack of insight. Organizational structures are not just work instruments. They are, in the language of psychoanalytic object relations theory, internalized holding systems. They provide orientation, create identity, regulate anxiety. For many people in leadership positions, their professional identity is closely intertwined with the structures in which they developed and which they helped shape. Questioning these structures is therefore not only a cognitive challenge – it is one that touches the self-image.

This explains the observable pattern: leadership adopts the language of transformation, fills presentations with announcements about the change that will now transform everything – while the decision architectures, reporting lines, and implicit power structures remain untouched. The reform appears to be happening, while preservation is the actual goal.

The Structural Shift: From Familiarity to Contingency

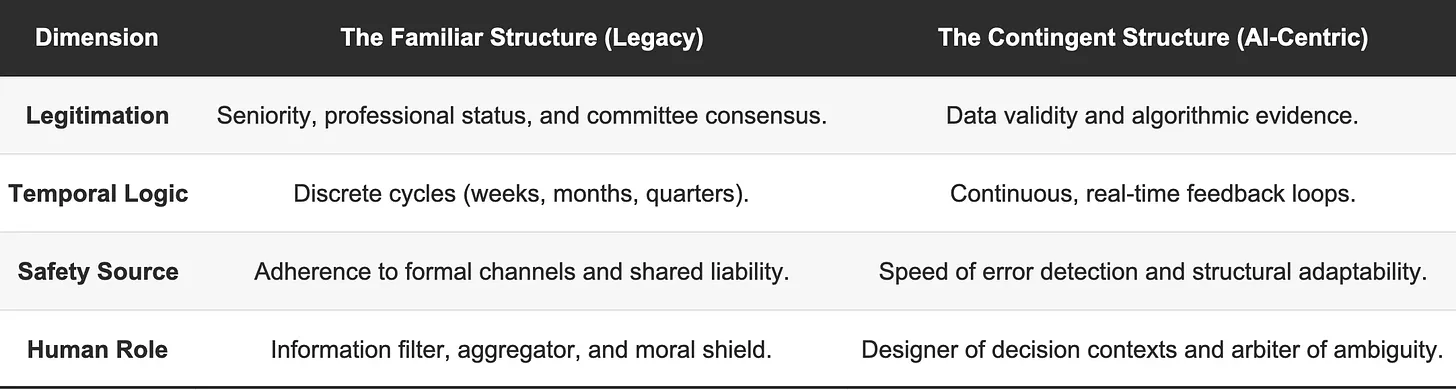

To move from the “Risk of the Familiar” to a viable AI-centric architecture requires more than a technical upgrade; it requires a fundamental shift in the underlying decision premises of the organization.

The question this raises for leaders is more uncomfortable than most strategic questions, because it cannot be answered from the outside: Which of our decision premises have we never explicitly decided – but treat as fixed, non-negotiable givens? Which structures exist solely because they once emerged – and would not be chosen today if we were starting from scratch?

Many of those who have read this far will question how realistic it is that established companies – with structures consolidated over decades – would now put these very structures up for discussion. I agree: it is not realistic for the majority of what are today called legacy organizations. The fear of one’s own inadequacy and vulnerability, together with management’s wish to protect itself, will in most cases outweigh the courage for genuine engagement, leading to exactly the self-contradictory and familiar standstill transformations described above. The fact that it is not realistic does not, however, change the risk of the resulting self-abolition. New companies, unbound by legacy structures, may – sooner than anticipated – replace those competitors still captured by their own preservation in a new market reality.

For those willing to genuinely put their own decision premises up for revision, there is a real opportunity – and it is one of the most consequential strategic moves available right now: not to introduce AI into existing structures, but to redesign structures around what AI makes possible.

Dr Alexander Frühmann, LL.M. (Yale), EMC (INSEAD) is founding partner at Singularity.Inc. He works with boards and organizations on human-AI dynamics and the strategic cultural aspects of transformations. His expertise sits at the intersection of systems theory, organizational psychology, and strategic leadership.