Strategy

A short read on why most AI transformations are not failing for the reasons leaders think they are.

—

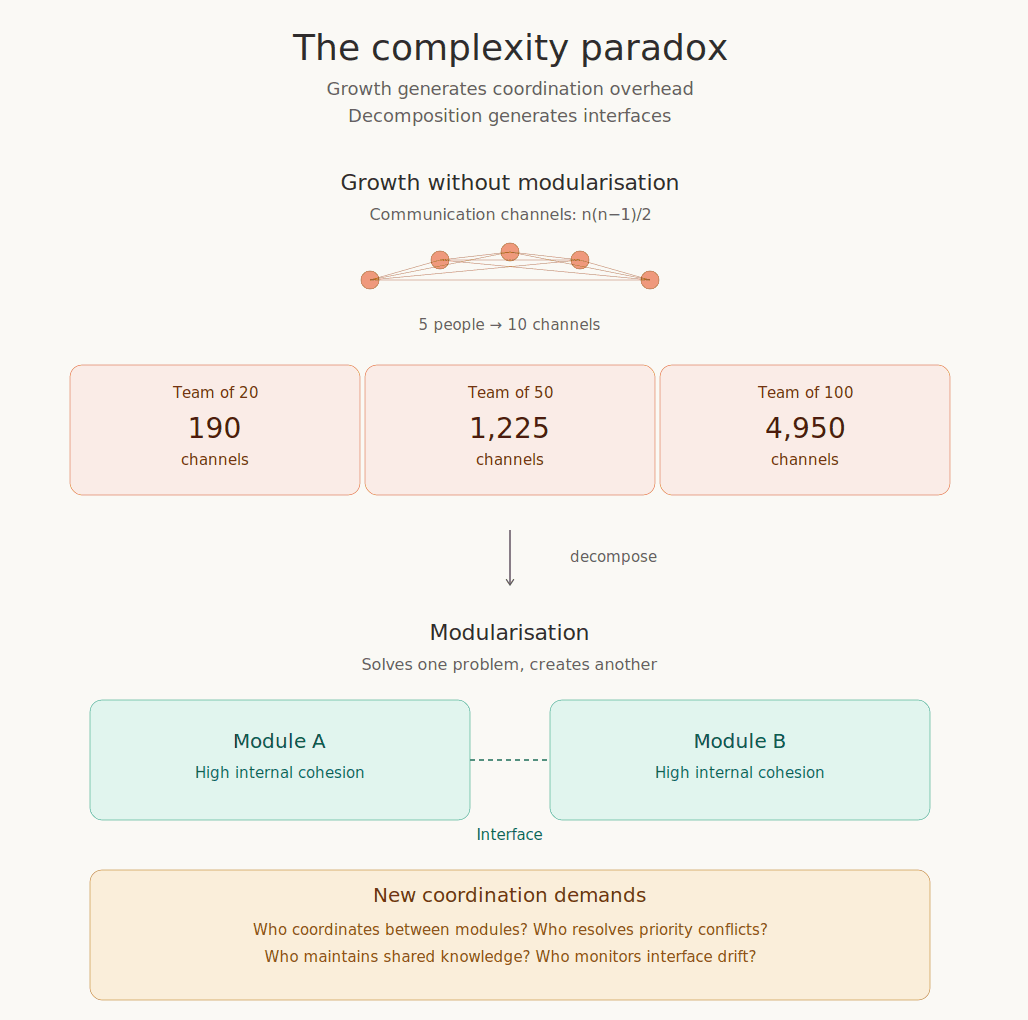

In 1975, Frederick Brooks published a slim book about why large software projects fail. The Mythical Man-Month became a classic for one observation: adding people to a project creates communication channels that grow not linearly, but quadratically, by the formula n(n−1)/2. A team of five has 10 channels. A team of twenty has 190. A team of fifty has 1,225.
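The arithmetic is easy to verify. A minimal Python sketch of the formula n(n−1)/2 follows; the function name `channels` is an illustrative choice, not something taken from Brooks.

```python
def channels(n: int) -> int:
    """Pairwise communication channels among n people: n(n-1)/2."""
    if n < 0:
        raise ValueError("team size must be non-negative")
    return n * (n - 1) // 2


# The three team sizes mentioned in the text.
for size in (5, 20, 50):
    print(size, channels(size))
# prints:
# 5 10
# 20 190
# 50 1225
```

Going from five people to fifty multiplies headcount by ten, but multiplies the channels by more than a hundred.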

Brooks was writing about software, but the principle has nothing to do with code. The same arithmetic plays out in law firms, hospitals, consulting partnerships, and multinational corporations. Beyond a certain threshold, the internal connections of a system overwhelm anyone's capacity to manage the whole.

The instinctive response is decomposition: divide, decouple, draw clear boundaries. Herbert Simon showed in 1962 why this works, using the parable of two watchmakers, Hora and Tempus. Hora built his watches from stable subassemblies and could absorb interruptions. Tempus assembled his as one continuous sequence and lost his work in progress whenever he was interrupted. Hora prospered. Tempus went out of business. Simon called this property near-decomposability: concentrating interdependence into zones of high internal cohesion, with minimal, controlled connections between them.

The trouble is that decomposition does not eliminate complexity. It transforms it. Every boundary you draw creates two things at once: clarity about what is inside, and a new interface that must be actively managed.

The Trade-Off

Martin Fowler has spent years documenting this dynamic in software. Organizations that aggressively decomposed their systems into microservices have, in many cases, started to selectively reconsolidate. The reason is structural, not technical. Distributed systems are harder to coordinate than they look. The operational complexity of managing dozens or hundreds of loosely coupled services requires a level of organizational maturity that many teams simply do not have.

The same pattern plays out in organizational design. A growing professional services firm decides to reorganize from a single pool of consultants into three specialized practice groups. The motivation is sound: deeper expertise, less internal friction, faster client work. But the reorganization immediately generates new demands. Who coordinates when an engagement requires capabilities from two practice groups? How are shared resources allocated? Who maintains the knowledge that used to flow freely across the undivided firm?

Conway's Law, formulated in 1968, captures this well: organizations that design systems are constrained to produce designs that mirror their own communication structures. The boundaries you draw are not just technical or functional. They reflect who talks to whom, who trusts whom, and where power sits.

There is, in other words, a zone between too much and too little decomposition. The optimal degree of decomposition is not a universal constant. It is a function of the specific system's dependency structure, cognitive capacity, and environmental volatility.
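A toy model can make this zone visible. The sketch below is an illustration under strong assumptions that are not in the original argument: 50 people are split into k groups of near-equal size, every pair inside a group needs one channel, every pair of groups needs exactly one interface, and an interface costs the same as a channel. The names `channels` and `total_links` are hypothetical.

```python
def channels(n: int) -> int:
    """Pairwise links among n nodes: n(n-1)/2."""
    return n * (n - 1) // 2


def total_links(people: int, groups: int) -> int:
    """Internal channels plus group-to-group interfaces (toy assumption:
    one interface per pair of groups, costing the same as one channel)."""
    if not 1 <= groups <= people:
        raise ValueError("groups must be between 1 and people")
    base, extra = divmod(people, groups)
    # Spread people as evenly as possible across the groups.
    sizes = [base + 1] * extra + [base] * (groups - extra)
    internal = sum(channels(size) for size in sizes)
    interfaces = channels(groups)
    return internal + interfaces


for k in (1, 5, 10, 25, 50):
    print(k, total_links(50, k))
# prints:
# 1 1225
# 5 235
# 10 145
# 25 325
# 50 1225
```

Under these assumptions, no decomposition and total decomposition cost the same, and the lowest totals sit in between. Real dependency structures are far less uniform, so the numbers should be read as a shape, not a recommendation.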

Why AI accelerates the complexity

When organizations introduce AI into their operations, they are not simply adding a tool. They are introducing a new category of actor into a system that was already struggling with coordination.

AI multiplies the nodes in the network. Every specialized agent - for marketing, for risk, for revenue, for operations - represents a new actor with its own interfaces, its own data requirements, its own failure modes, and its own oversight needs. McKinsey describes a horizon in which realizing ROI from agentic AI requires organizations to activate thousands of agents enterprise-wide. Apply Brooks's arithmetic to that landscape and the coordination overhead becomes staggering.
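To put rough numbers on that claim, the sketch below counts potential pairwise links, treating every agent and every person as a node that could in principle need to coordinate with every other node. That is an upper bound and an assumption of this illustration, since real agents talk only to some of their peers. The figures of 1,000 agents and 50 people are hypothetical, as are the function names.

```python
def channels(n: int) -> int:
    """Potential pairwise links among n nodes: n(n-1)/2."""
    if n < 0:
        raise ValueError("node count must be non-negative")
    return n * (n - 1) // 2


def added_channels(existing: int, new: int) -> int:
    """Extra potential links created by adding `new` nodes to `existing` ones."""
    return channels(existing + new) - channels(existing)


# Hypothetical scenario: 1,000 agents on their own,
# then the same 1,000 agents added to a 50-person team.
print(channels(1_000))            # 499500
print(added_channels(50, 1_000))  # 549500
```

Even if only a small fraction of these potential links ever becomes a real interface, the count is far beyond what a 50-person team managed before the agents arrived.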

The instinctive response, again, is modularization: specialized agents, bounded domains, well-defined APIs. This is sound engineering. It is also where the paradox accelerates. Every specialized agent creates a new interface that needs to be managed. The answer, increasingly, is more agents - orchestration agents, guardian agents, monitoring agents. MIT Sloan and BCG, in their 2025 research on the agentic enterprise, describe organizations building layered systems of agents that supervise other agents. This is modularization generating new interfaces generating new modules generating new interfaces, at machine speed.

What makes this particularly treacherous is that AI adoption tends to happen unevenly. Individual employees and forward-leaning teams adopt AI tools rapidly, while governance structures and coordination frameworks lag far behind. Conway's Law gets a new twist: the communication structure of the organization is no longer shaped only by human relationships. It is shaped by a patchwork of human teams, AI tools adopted bottom-up without central coordination, and enterprise AI systems deployed top-down with unclear boundaries. The system architecture that emerges is, predictably, incoherent.

The psychological defense beneath the language

In my coaching work with senior leaders, what strikes me most is how rarely the conversation is actually about technology. The language is mostly technical. I hear about "implementation challenges," "change management," "governance frameworks." But underneath sits a different question: "If AI can handle parts of what I do, what is my role in this new structure?"

This is uncomfortable enough for an individual. Multiplied across an entire leadership team, it produces a system-wide defense that manifests as endless pilot programs, postponed decisions, and a peculiar form of magical thinking in which AI is simultaneously the solution to everything and the responsibility of nobody.

Modularization, viewed from this angle, is rarely a purely rational design choice. It is also a way of distributing or avoiding anxiety. Leaders who push through AI-driven reorganizations often underestimate the new problems these will create, because acknowledging those problems would undermine the narrative that drove the investment. The governance model gets designed too late, or not at all. The interface protocols are left vague. The coordination costs are treated as teething problems rather than structural features. When the predictable difficulties appear, they are met with surprise rather than recognition.

What working with this new mess actually looks like

There is no architecture that resolves the complexity paradox. There is only the discipline of working with it. Three principles tend to separate the organizations that navigate this well from those that do not.

First, AI adoption should follow the same logic as any other form of modularization: deliberate, incremental, and respectful of the interfaces it creates. The instinct to deploy AI across the entire organization simultaneously almost always overshoots the mark. Better to identify the specific clusters of activity where AI adds genuine value with manageable interface costs, and to let the boundaries between human and machine work stabilize before extending the pattern further. Dave Snowden's framework for complex domains - probe, sense, respond - is closer to the right posture than any comprehensive transformation roadmap.

Second, interfaces deserve as much design attention as the capabilities themselves. This is where most organizations underinvest, especially with AI. The internal workings absorb attention: model selection, training data, prompt engineering. But the spaces between the AI and the rest of the organization - the protocols for when a human overrides a machine recommendation, the feedback loops that keep an agent's outputs aligned with changing context, the escalation paths when something goes wrong - are often left to emerge organically, which usually means they emerge badly.

Third, leaders need to develop a tolerance for the irreducible messiness of the trade-off. There is no configuration in which both problems - internal tangle and interface overhead - disappear. The skill is in reading the signals and adjusting continuously, without the fantasy that a final, stable architecture is waiting to be found.

Simon's watchmaker Hora did not build a perfect watch. He built one that could survive interruptions. That is the more realistic ambition for leaders navigating AI integration: not to eliminate complexity, but to arrange it in a way that the system can absorb disturbance without collapsing.

The organizations that will navigate this well are not the ones with the most sophisticated AI. They are the ones whose leaders understand that every new capability they introduce is also a new interface they must manage, and who have the patience and the intellectual honesty to keep adjusting the balance rather than pretending they have found the answer.

—

This is a short version. The full essay - with extended argumentation, sources, and footnotes - is available on Alexander's personal website: https://fruehmann.eu/the-complexity-paradox

Dr Alexander Fruehmann is a co-founder of Singularity.Inc, an AI strategy consultancy operating in Austria and Germany.